Over the past year, I've been seeing indications of what will be a big trend in cloud consumption: enterprises moving their networks to the cloud along with their data centers. I'm talking primarily about the WAN that many enterprises maintain worldwide. Local offices will still need connectivity to the WAN; it's just that they will increasingly become on-ramps to a worldwide WAN hosted in the cloud. In other words, data centers will no longer be the "center" for all network access.

Graphically, the concept of moving the WAN to the cloud would look like Figure 1 below. Notice how all data centers and offices are connected to the WAN, which handles traffic between them. While the image doesn't show it, the cloud-based WAN is worldwide and can serve offices and data centers across the globe.

Figure 1: Cloud-based WAN Network

Let's contrast this with Figure 2, which depicts the WAN topology common in enterprises today. Note that public cloud access typically routes via data centers, making enterprise application access data-center-centric. Worldwide connectivity is managed by a custom MPLS network.

Figure 2: Traditional Worldwide MPLS Network

I'm seeing several motivations for the change in thinking about how worldwide networks should be organized. I'll separate the reasoning into the following categories:

- Complexity

- Performance

- Financial

- Speed to Market

Complexity

The complexity of non-cloud MPLS networks, the base for most enterprise worldwide WANs, is tremendous. MPLS networks typically require large amounts of hardware that needs to be upgraded and replaced regularly. They also tend to come with numerous vendor contracts, and they take a large networking staff. While some enterprises outsource that staffing to a managed services provider (MSP), the staff is still necessary. Moving a large portion of the network to cloud vendors outsources much of this complexity and the associated maintenance.

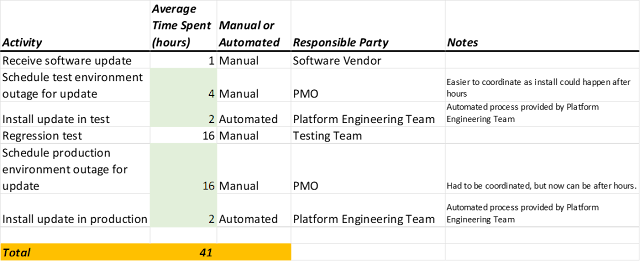

The complexity increases the business risk of change. MPLS networks are rarely supported by sandbox test environments or automation. Many enterprises still make changes manually, leading to inevitable human error and outages for users. Cloud vendors make it much easier to automate the WAN infrastructure and to stand up a sandbox environment for testing networking-related changes. This decreases the business risk of changes to networking infrastructure. This is huge: for most enterprises, the WAN that integrates all data centers and offices is essential.

Capacity planning requirements are simpler too. The hardware and vendor contracts needed for worldwide MPLS networks require sophisticated capacity planning because of their long lead times. With cloud WAN implementations, capacity planning still exists, but it is far simpler, and capacity can be changed on the fly.
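To make the lead-time point concrete, here is a minimal sketch in Python. It assumes compound monthly traffic growth, and every number in it (10 Gbps of current demand, 5% monthly growth, a six-month MPLS lead time, and an effectively zero cloud lead time) is hypothetical and chosen only for illustration. The function name `capacity_to_order` is also my own invention.

```python
def capacity_to_order(current_gbps: float, monthly_growth: float,
                      lead_time_months: float) -> float:
    """Capacity that must be ordered today so that it still covers demand
    when the order is finally delivered, assuming compound monthly growth."""
    return current_gbps * (1 + monthly_growth) ** lead_time_months


# Hypothetical inputs: 10 Gbps of demand today, growing 5% per month.
# MPLS: assume a six-month lead time for hardware and contracts.
mpls_order = capacity_to_order(10.0, 0.05, lead_time_months=6)
# Cloud WAN: assume the change takes effect almost immediately.
cloud_order = capacity_to_order(10.0, 0.05, lead_time_months=0)

print(f"MPLS order must cover:  {mpls_order:.1f} Gbps")
print(f"Cloud order must cover: {cloud_order:.1f} Gbps")
```

The longer the lead time, the further ahead you have to forecast and the more capacity you pay for before you need it. With a short lead time, a forecasting error can be corrected quickly rather than lived with for months.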

Performance

Network latency is generally lower with cloud-provided WAN networking than with worldwide MPLS networks. While your mileage will vary depending on your MPLS implementation, so much R&D goes into cloud-provided WANs that the likelihood of an enterprise maintaining any network performance advantage over time is low. Face it, most firms just can't compete.

Network latency is higher when accessing a resource requires traffic to cross between on premises and the cloud. As more IT workloads move from on premises to the cloud, closer proximity to the cloud will yield better performance. To this end, I see more enterprises leveraging cloud VPN services, which sit closer to most application workloads and therefore perform better.
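The routing argument can be sketched as a simple sum of per-hop latencies. This is only an illustration: the site names and the millisecond figures below are hypothetical, not measurements from any real network.

```python
# Hypothetical one-way latencies in milliseconds between sites.
LATENCY_MS = {
    ("branch", "datacenter"): 40,
    ("datacenter", "cloud_app"): 35,
    ("branch", "cloud_onramp"): 8,
    ("cloud_onramp", "cloud_app"): 12,
}


def path_latency(path):
    """Sum the per-hop latencies along an ordered list of site names."""
    return sum(LATENCY_MS[(a, b)] for a, b in zip(path, path[1:]))


# Traditional topology: cloud traffic routes through the data center.
via_datacenter = path_latency(["branch", "datacenter", "cloud_app"])
# Cloud-based WAN: the office is an on-ramp directly into the cloud.
via_cloud_wan = path_latency(["branch", "cloud_onramp", "cloud_app"])

print(f"Via data center: {via_datacenter} ms")
print(f"Via cloud WAN:   {via_cloud_wan} ms")
```

The actual numbers will differ for every enterprise. The point is structural: when most workloads live in the cloud, a path that detours through a data center adds a hop that the on-ramp path avoids.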

Financial

Converting networking hardware and infrastructure from capital expense (CapEx) to operational expense (OpEx) is appealing to many enterprises from an accounting perspective. As with computing resources, you pay for what you use for cloud-based WANs without hardware expenditures and management.
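As a rough illustration of the accounting difference, the sketch below compares a fixed monthly cost for owned hardware against a usage-based charge. All figures (hardware price, lifetime, maintenance, price per TB, monthly usage) are hypothetical, as are the function names. Real pricing varies widely, and the comparison can go either way depending on your traffic.

```python
def owned_monthly_cost(hardware_cost, lifetime_months, monthly_maintenance):
    """Straight-line monthly cost of owned hardware, paid regardless of usage."""
    return hardware_cost / lifetime_months + monthly_maintenance


def usage_monthly_cost(usage_tb, price_per_tb):
    """Usage-based monthly cost: pay only for the traffic actually carried."""
    return usage_tb * price_per_tb


# Hypothetical CapEx case: $360,000 of hardware over 36 months,
# plus $2,000 per month in maintenance.
fixed_cost = owned_monthly_cost(360_000, 36, 2_000)

# Hypothetical OpEx case: $20 per TB, with traffic that varies month to month.
monthly_usage_tb = [200, 250, 400]
usage_costs = [usage_monthly_cost(tb, 20) for tb in monthly_usage_tb]

print(f"Owned hardware: ${fixed_cost:,.0f} every month")
for tb, cost in zip(monthly_usage_tb, usage_costs):
    print(f"Usage-based:    ${cost:,.0f} for {tb} TB")
```

The owned-hardware cost is the same in a quiet month as in a busy one, while the usage-based cost tracks the traffic.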

Networking labor is expensive and specialized. Some enterprises mitigate this cost by enlisting a managed services provider (MSP), but outsourcing that labor to cloud vendors is generally cheaper because it capitalizes on the cloud's economies of scale.

Speed to Market

No more long lead times for MPLS network upgrades and capacity increases. Increasing MPLS network capacity takes sophisticated capacity planning and typically long lead times due to additional hardware expenditures. By contrast, increasing capacity in a cloud-provided WAN is typically measured in hours, not months. Furthermore, cloud-provided WAN products benefit from the cloud's dynamic scaling capabilities.

Additional Benefits

The firm gets access to research and development advances made by cloud providers. The R&D resources that cloud providers are investing in WAN technologies surpass what most enterprises are able or willing to invest. This means that over time, improvements in functionality and performance are likely to appear in cloud vendors' offerings first.

A cloud-based WAN is a natural partner for a cloud-based VPN capability. This makes sense especially if the cloud hosts a larger percentage of application compute resources. Consuming the cloud provider's VPN solution brings users' point of entry closer to the compute resources they access. With that closer proximity typically comes better performance.

A cloud-based WAN is a natural partner for integrating multiple cloud providers. That is, your AWS footprint can be securely connected to your Azure or GCP footprint directly. This avoids the slower connection between the cloud providers through an on-premises data center.

Concluding Remarks

I'm reporting what I'm seeing at clients. This idea made no sense when many had only a small fraction of their IT footprint in the cloud. Now that most firms have most of their footprint in the cloud, thinking on how to provide worldwide access to internal users needs to evolve. And the time for that evolution has come.

If you have thoughts or feedback, please contact me directly via LinkedIn or email. Thanks for taking the time to read this article.